According to Gizmodo, Nvidia officially launched its new Rubin AI platform at CES 2026, a six-chip supercomputer it claims is more efficient than its current Blackwell models. The platform promises a tenfold reduction in inference token costs and can train complex “mixture of experts” models using a quarter as many GPUs. Company executives said Rubin-based products will be available from partners, including AWS, Google, Meta, Microsoft, and OpenAI, in the second half of 2026. The launch comes as massive AI-driven demand consumes roughly 40% of global DRAM output, causing price hikes and shortages. In response, Nvidia is also introducing a new Inference Context Memory Storage Platform, dedicated infrastructure for managing the growing data needs of agentic AI systems during inference.

Memory is the new bottleneck

Here’s the thing: for years, the race was all about raw compute power: FLOPS, tensor cores, you name it. But the conversation has fundamentally shifted. As Nvidia’s own Dion Harris put it, “The bottleneck is shifting from compute to context management.” We’re hitting a wall where having the fastest processor doesn’t matter if it’s constantly waiting for data. This is especially true for the new wave of agentic AI, where systems need to remember long conversations and complex tasks, not just answer one-off questions. Suddenly, memory isn’t an afterthought; it’s the main event. And the entire industry is feeling the pinch, with reports suggesting the shortage is even pushing up prices for consumer gadgets and rival GPUs from companies like AMD.

How Rubin aims to fix it

So, what’s Nvidia’s play? It’s a two-pronged attack: efficiency and new architecture. By claiming Rubin can do the same work with far fewer chips, they’re directly attacking the quantity problem described in Tom’s Hardware’s reporting on the shortage. If you need 4 GPUs instead of 16 to train a model, that’s a huge relief on the supply chain. But the more interesting move is the new Inference Context Memory Storage Platform. Basically, they’re creating a new, specialized tier of storage that sits close to the GPU, acting like a massive short-term memory bank. This isn’t just about adding more RAM; it’s about redesigning the data pathway for the specific, memory-hungry task of inference. Think of it as building a bigger, smarter waiting room so the processor, the “doctor” in this analogy, is never idle.

The bigger picture and remaining hurdles

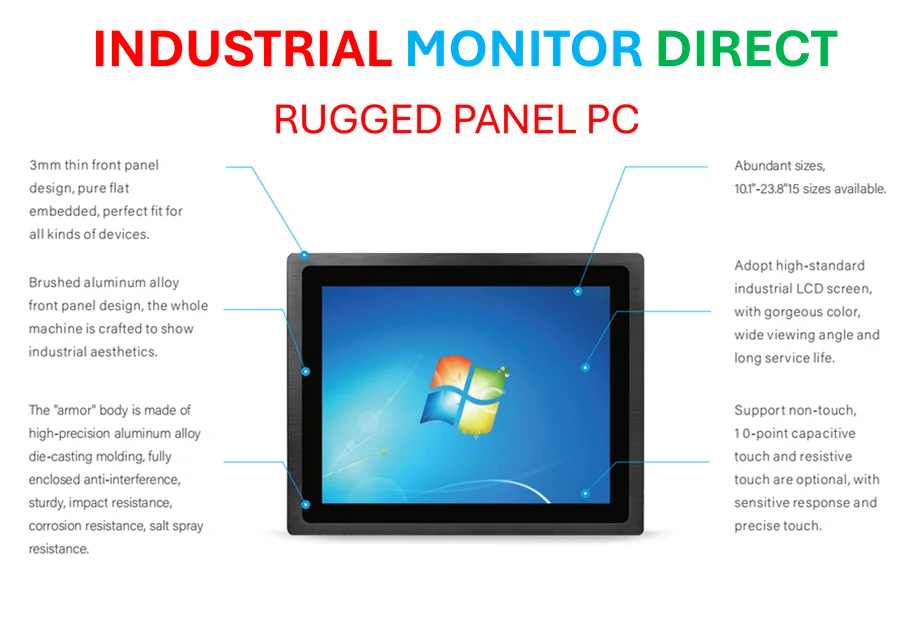

Now, let’s be a bit skeptical. Promising 10x cost reductions is a massive claim, and we won’t see real-world benchmarks until late 2026. Is this a genuine architectural leap or just savvy marketing against a backdrop of fear about shortages? The aggressive timeline and the list of all-star partners suggest it’s real. But even if Rubin works perfectly, does it solve everything? Not even close. The global memory shortage is a systemic supply chain issue. Furthermore, Nvidia’s own purchase of Groq last month shows they’re hedging their bets, buying expertise in inference-specific chips. And then there’s the elephant in the room: power. These denser, more efficient systems still draw immense electricity. Solving the memory bottleneck might just reveal the next one: the staggering strain on the power grid. The race isn’t just for better chips; it’s for sustainable infrastructure to run them.

What it all means

Look, Nvidia isn’t just selling a new product; it’s trying to sell a solution to the industry’s biggest anxiety. By framing Rubin as an answer to the memory crisis, they’re positioning themselves as the essential partner, not just a component vendor. They’re telling every CEO and CTO, “We see the problem, and we’ve built the escape hatch.” Will it work? In the short term, absolutely. It gives cloud providers and AI labs a roadmap and something to plan around. But the long-term fix requires more than one company’s innovation. It needs increased memory chip production, better data center efficiency, and maybe even new materials science. For now, though, Nvidia is making the most compelling argument that the path forward isn’t just more hardware—it’s smarter hardware. And the entire tech world will be watching to see if Rubin delivers.